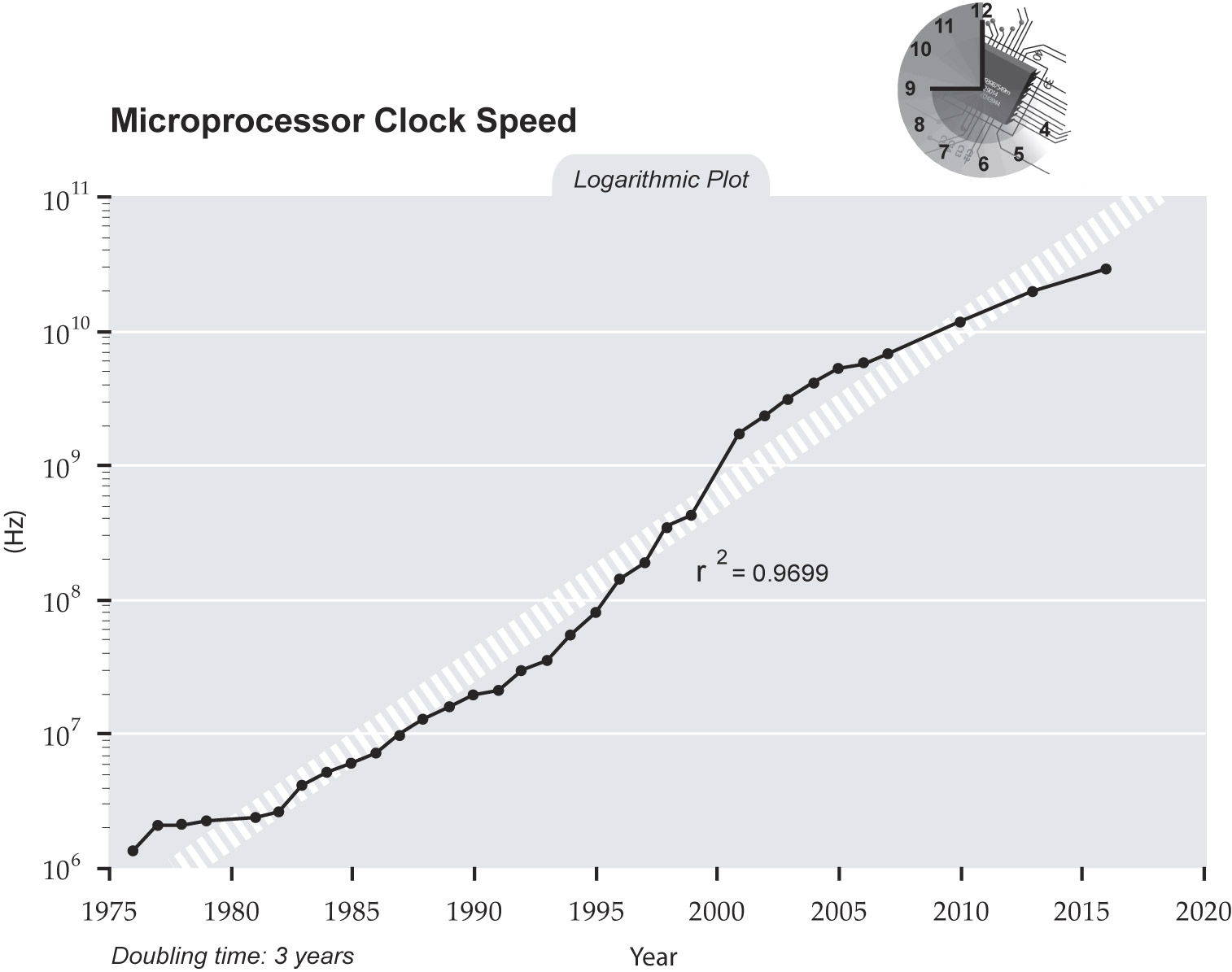

Next Gen Computer Processors

By C. M. - May 2018.

Ten years ago we posted an article titled "Chipzilla Tukwila: The Next Generation of Computer Processors", which talked about the next generation of computer processors and also about liquid cooling with nitrogen. You can read the article further below.

Since 2008 computer processing power hasn't really gone anywhere. It has basically stagnated. It is very difficult to increase the speed of computers these days. For example, one of the recent developments was simply to double or quadruple the number of processor cores on a chip, resulting in dual-core and "quad-core" processors. Beyond that, the clock speeds we see in new computers aren't really that much faster than those we saw circa 2005.

It used to be that processing power would double every two years, and then double again a couple of years later. What we see these days is a plateau effect, wherein chips are only getting marginally faster and the "doubling" has slowed to once every 3 years. The slowdown started around 2001 and continued in the years that followed.
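
To put the two doubling rates side by side, here is a minimal sketch of the compounding arithmetic. It assumes clean exponential growth, which real chips never follow exactly, and the function name `growth_factor` is purely illustrative.

```python
def growth_factor(years: float, doubling_period_years: float) -> float:
    """Relative processing power after `years`, assuming it doubles
    once every `doubling_period_years` (an idealized model)."""
    return 2 ** (years / doubling_period_years)


# Compare a decade of growth under the two doubling periods mentioned above.
decade = 10
every_two_years = growth_factor(decade, 2)
every_three_years = growth_factor(decade, 3)

print(f"Doubling every 2 years for {decade} years: {every_two_years:.1f}x")
print(f"Doubling every 3 years for {decade} years: {every_three_years:.1f}x")
```

Under these assumptions a decade of doubling every two years gives a 32-fold gain, while doubling every three years gives only about a 10-fold gain, so a seemingly small change in the doubling period adds up to a large gap.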

Looking at the chart, you can see that there have been marginal improvements in speed, but increasingly the problem these days is making computer chips that are both faster and affordable... while also making the chip safe to use so it doesn't overheat and start a fire. Can we overclock the CPU and make it faster using liquid nitrogen? Yes, we can. But why bother? You can watch the video on this topic from 2017, when they set not 1 but 2 new records for overclocking a CPU. The record has since been broken multiple times.

So as I mentioned above, yes, we can overclock a CPU. Any chip can be overclocked. But should we bother doing that? With overclocking, the biggest danger is accidentally overheating the chip so that it catches fire and ruins your computer. The benefits don't outweigh the risks. Overclocking is basically a hobby for people with too much money and not enough common sense.

Miniaturization

Another development in recent years is making chips smaller, largely for use in smartphones, tablets and other gadgets. It is possible that the recent focus on miniaturization has slowed down the development of faster chips. Research money is finite, there is only so much to go around, and with the increased attention on smartphones and similar devices more of it has been poured into miniaturization.

Miniaturization does hold promise for helping the tech industry serve gadgets to the willing masses who want to buy them, but those gadgets are also increasingly disposable. Gone are the days when someone bought a computer and used it for 5 years, perhaps upgrading it once every year or two before eventually deciding to get a new one. These days people get a smartphone instead, which practically doubles as their personal computer, and they get a new one every year or every 2 years (mostly depending on their phone contract). As a result, laptop sales are flat and desktop computer sales are down. Tablets are the new thing, although frankly if you want to write anything big it is still better to do it on a desktop or laptop.

The chips in smartphones are also an issue, as some manufacturers have discovered that the chips (and sometimes the batteries) are prone to overheating and catching fire.

Searching for a Quantum Computing Solution

There are a few researchers out there who are trying to develop computer processing chips that are significantly faster than anything currently on the market, by using the quantum state of atoms to process data faster. The idea is promising, but it is still possibly decades away from a working prototype and, eventually, a mass-market chip. So don't hold your breath for that one.

And now, without further delay, we give you...

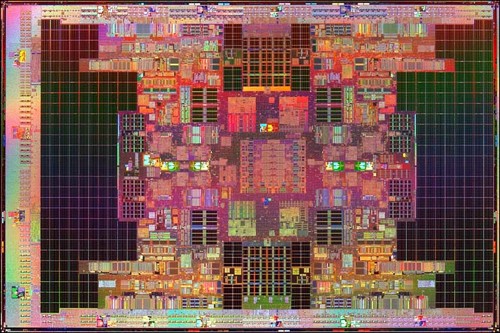

Chipzilla Tukwila: The Next Generation of Computer Processors

February 2008.

Intel announced early this month that it had made an experimental processor chip - nicknamed Tukwila - with an astounding two billion transistors on one piece of silicon. That's an almost fourfold increase in transistors on one substrate since 2004. It has also been called Chipzilla - a takeoff on Godzilla coined by industry wags. The first generation of Tukwilas - itself a descendant of Intel's Itanium processor line - was announced in 2006, when it cracked the one billion transistor barrier.

The new chip is a quad-core chip: four independent processors operating together on one substrate. This works faster than a single processor because it allows each separate core to process instructions at the same time, rather than having them queue for a single processor.
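
The queueing argument can be sketched with a toy model. It assumes equal-length, fully independent tasks and cores that each finish one task per time step, which is an idealization, since real workloads rarely split this cleanly. The name `time_steps_needed` is illustrative only.

```python
import math


def time_steps_needed(num_tasks: int, num_cores: int) -> int:
    """Time steps to finish `num_tasks` equal, independent tasks when
    each core completes one task per step (idealized assumption)."""
    return math.ceil(num_tasks / num_cores)


tasks = 8
single_core = time_steps_needed(tasks, 1)  # every task queues for one core
quad_core = time_steps_needed(tasks, 4)    # four tasks run side by side

print(f"Single core: {single_core} steps")
print(f"Quad core:   {quad_core} steps")
print(f"Ideal speedup: {single_core / quad_core:.1f}x")
```

With 8 tasks the quad-core case needs 2 steps instead of 8. With 10 tasks it needs 3 steps, so the speedup drops below fourfold whenever the work does not divide evenly among the cores.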

Tukwila runs at a modest 2 GHz, less than half the 4.7 GHz of the world's fastest processor, announced by IBM in 2007. The IBM chip, however, with 790 million transistors, has fewer than half as many transistors as the Tukwila. While this certainly makes the new chip a monster processor, it's less a revolution than an evolution in processor design. Many of the additional transistors are used for memory and register storage, providing faster access to certain types of data than the system's RAM can. Again, that's another trend: to provide more cache memory on the chip rather than depending on separate memory units.

That cache - 30 MB of storage - is one of the reasons Tukwila can operate at such a low clock speed: memory components, especially cache memory, don't need to run as rapidly. Since the cache is so close to the processor, the signals don't have to travel as far either (half the distance means roughly half the delay). The CPU speed doesn't reflect the speed of data transfer to and from this cache. Using a technology called QuickPath Interconnect (QPI), Intel's Tukwila has a bus transfer rate of about 4.8 GT/s (gigatransfers per second). QPI will be integrated into all of Intel's next-generation chips, including the x86 chip upgrades expected later this year.

Where Tukwila bucks the trend is in its increased power consumption: a 25 per cent jump over previous generations, and rated at 170 watts.
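
As a quick sanity check on the comparisons above, the following sketch works through the ratios. It reads the IBM figure as 790 million transistors, which is an assumption about the intended number, and it takes the 25 per cent power jump at face value in order to back out the previous generation's rating.

```python
# Figures quoted in the article; the IBM transistor count is assumed
# to mean 790 million.
tukwila_clock_ghz = 2.0
ibm_clock_ghz = 4.7
tukwila_transistors = 2_000_000_000
ibm_transistors = 790_000_000
tukwila_power_watts = 170
power_increase = 0.25  # "a 25 per cent jump over previous generations"

clock_ratio = tukwila_clock_ghz / ibm_clock_ghz
transistor_ratio = ibm_transistors / tukwila_transistors
previous_power_watts = tukwila_power_watts / (1 + power_increase)

print(f"Tukwila clock as a share of IBM's: {clock_ratio:.0%}")
print(f"IBM transistors as a share of Tukwila's: {transistor_ratio:.0%}")
print(f"Implied previous-generation power: {previous_power_watts:.0f} W")
```

Both "less than half" claims hold under these figures, at roughly 43 per cent and 40 per cent respectively, and the implied rating of the previous generation works out to 136 watts.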

Don't shelve your old PC quite yet: the Tukwila is designed for high-end servers, not home and small business computers. It will not be the next chip for gamers. And it's only announced, not in production - it won't be available until mid-2008, and at a price that will have IT managers shaking.

It's not the end of the line, either. Tukwila still uses the older 65nm technology, which is much larger than Intel's 45nm technology and twice as large as the still-experimental 32nm dies. The previous generation of Itanium was based on a 90nm technology. It is theoretically possible to cram a lot more components onto the same size substrate by using a smaller process. Many pundits are predicting four billion transistors on a single piece of silicon using these small-scale technologies by 2010, about the time Tukwila's successor, Poulson, is scheduled for release. At that point we should be expecting a speed of 5 to 6 GHz and broad market capability.
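
To get a feel for how much a smaller process could add, here is a rough sketch. It assumes transistor area shrinks with the square of the feature size, an idealized rule of thumb that real designs only approximate, and the function name `ideal_density_gain` is illustrative.

```python
def ideal_density_gain(old_nm: float, new_nm: float) -> float:
    """Idealized gain in transistors per unit area when shrinking from
    `old_nm` to `new_nm`, assuming area scales with feature size squared."""
    return (old_nm / new_nm) ** 2


tukwila_transistors = 2_000_000_000  # two billion at 65nm, per the article

for node_nm in (45, 32):
    gain = ideal_density_gain(65, node_nm)
    projected_billions = tukwila_transistors * gain / 1e9
    print(f"65nm -> {node_nm}nm: {gain:.2f}x density, "
          f"about {projected_billions:.1f} billion transistors")
```

Under this assumption a move from 65nm to 45nm roughly doubles the transistor count on the same substrate, which lines up with the four-billion prediction, while 32nm would allow roughly four times as many.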

The Challenge of Overheating

There is still the problem of overheating processors, which can cause your computer to slow down or spontaneously restart. The solution is to have a really good cooling system, but unfortunately liquid nitrogen is still really expensive. But here's a thought: what about freon, the chemical used in refrigerators and air conditioners? We've been using freon for decades now, so doesn't it make sense to use it as a computer coolant as well? Basically all you need is a mini air conditioner where your computer fan normally goes. The alternative is to keep your computer in a cold basement, because it will run faster and have fewer shutdown problems there, but who wants to sit in a cold basement all the time? Or worse, a freezing meat locker.

Theoretically computer speed is governed by three things: power, miniaturization of the chips and our ability to keep the chips cool. Power here means what the chip itself draws and dissipates, not the wall voltage: whether the outlet supplies 110 volts, as in North America, or 220, as in Asia and certain European countries, the power supply converts it to the same low DC voltages, so the mains voltage does not set the processor's speed. Tukwila and Poulson above are advancements in miniaturization, squeezing more power into a smaller space (and reducing the distance the electrons have to travel), so one of the few remaining obstacles is overheating (which can be really bad if your server crashes).

Whatever the future holds, whether it be freon or liquid nitrogen, I have to wonder: Will it ever get fast enough that we stop worrying about speed? No more load times, just instant gratification? I hope so.


Website Design + SEO by designSEO.ca ~ Owned + Edited by Suzanne MacNevin